Most teams don’t fail at qualitative content analysis because they lack data. They fail because they start coding before they decide what kind of meaning they’re actually trying to extract. I’ve watched smart product teams burn 40 hours tagging interview transcripts, app reviews, and support tickets, then realize they mixed surface complaints, inferred motivations, and frequency counts into one mushy framework that nobody trusted.

The painful part is that qualitative content analysis is supposed to be systematic. Done well, it gives you a repeatable way to analyze text, audio, or visual material and turn it into categories, themes, and decisions. Done badly, it becomes aesthetic highlighting with a research vocabulary.

Premature coding creates false confidence. Teams often jump straight into labels like “confusing UX,” “pricing issue,” or “trust concerns” without defining the unit of analysis, the question they’re answering, or whether they’re analyzing manifest content or latent content.

That sounds small, but it changes everything. Manifest content is the explicit, visible meaning in the data: the user says “I couldn’t find the export button.” Latent content is the underlying meaning: the product violates the user’s expectation of where high-value actions should live. If you blend those levels, your dataset becomes impossible to compare across coders.
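One way to keep the two levels apart is to give them separate fields in the coding record. The sketch below is an illustration only: the `CodedSegment` class, its field names, and the code labels are hypothetical, not part of any standard tool.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class CodedSegment:
    """One coded unit. All field names here are hypothetical."""
    source_id: str                      # interview, review, or ticket identifier
    quote: str                          # verbatim text being coded
    manifest_code: str                  # what the user explicitly said
    latent_code: Optional[str] = None   # inferred meaning; empty until justified


def manifest_counts(segments):
    """Count explicit (manifest) codes only, ignoring inferred causes."""
    counts = {}
    for seg in segments:
        counts[seg.manifest_code] = counts.get(seg.manifest_code, 0) + 1
    return counts


segments = [
    CodedSegment("int-03", "I couldn't find the export button.",
                 manifest_code="export_not_found",
                 latent_code="expected_placement_violated"),
    CodedSegment("int-11", "Where do I download my report?",
                 manifest_code="export_not_found"),
]

print(manifest_counts(segments))
```

Because the latent code is optional, a coder can record the explicit complaint without being forced to guess at a cause, and the two levels can be compared across coders separately.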

I saw this on a 12-person B2B SaaS team running 28 onboarding interviews after activation rates dropped. Product wanted “themes,” support wanted “top complaints,” and growth wanted “what blocks conversion.” We coded all three into the same spreadsheet, and by week two, we had 67 codes, three competing decks, and zero decision clarity. The fix was embarrassingly simple: separate explicit friction from inferred cause, then rebuild the coding frame around one decision — why trial users stalled before first value.

The other failure is treating all content analysis as one method. It isn’t. There are three main approaches: conventional (inductive), directed (deductive), and summative. Each one answers a different kind of question.

Pick the method based on theory maturity, not preference. If you don’t know what patterns exist, start with conventional, inductive coding. If you’re testing an existing framework, use directed, deductive coding. If word use itself is meaningful, use summative analysis.

Most product teams should not default to summative analysis. Counting terms like “slow,” “buggy,” or “expensive” looks rigorous, but frequency alone rarely explains behavior. I’ve seen one checkout study where “confusing” appeared only six times across 54 interviews, while hesitation patterns around fee disclosure showed up everywhere in context — and that latent pattern was the real churn driver.
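To make the limitation concrete, here is a minimal sketch of summative counting paired with a keyword-in-context view. The function names, the sample responses, and the simple regex tokenizer are all assumptions made for illustration.

```python
import re
from collections import Counter


def tokenize(text):
    """Lowercase word tokens; a deliberately simple tokenizer."""
    return re.findall(r"[a-z']+", text.lower())


def term_counts(responses, terms):
    """Count how often each tracked term appears across all responses."""
    counts = Counter()
    for text in responses:
        for token in tokenize(text):
            if token in terms:
                counts[token] += 1
    return counts


def keyword_in_context(responses, term, window=5):
    """Return the words surrounding each occurrence of `term`."""
    hits = []
    for text in responses:
        tokens = tokenize(text)
        for i, token in enumerate(tokens):
            if token == term:
                start = max(0, i - window)
                hits.append(" ".join(tokens[start:i + window + 1]))
    return hits


# Invented sample responses, for illustration only.
responses = [
    "The app is slow when I upload photos.",
    "Checkout was confusing because the fee showed up at the end.",
    "Not slow, just expensive for what it does.",
]

counts = term_counts(responses, {"slow", "buggy", "expensive", "confusing"})
print(counts)
print(keyword_in_context(responses, "slow", window=2))
```

The count reports "slow" twice, but the context view shows that one of those mentions is a user saying the product is not slow. The raw frequency hides that difference.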

Directed analysis is also overused. Teams love starting with pre-built buckets like usability, value, trust, and support because it feels efficient. In practice, those buckets often flatten the surprising stuff. If users keep talking about “risk to reputation” when adopting an AI feature, and your framework only has “trust,” you miss the sharper insight.

If you need a broader foundation for where content analysis fits, I’d start with this guide to qualitative data analysis. Content analysis is one method inside a bigger analysis toolkit, not a replacement for all of it.

Your unit of analysis determines whether your findings will hold up. Before you code a single sentence, decide what counts as one analyzable unit: a word, phrase, sentence, full response, episode in an interview, or entire document.

This is where many teams quietly ruin reliability. If one researcher codes at sentence level and another codes at story level, agreement rates become meaningless. You’re not disagreeing on interpretation; you’re working on different objects.
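A small sketch shows why mismatched units break comparison. The `split_units` helper and the naive sentence splitter are assumptions for illustration; real transcripts usually need more careful segmentation.

```python
import re


def split_units(response: str, level: str) -> list[str]:
    """Split one response into analyzable units at the chosen level."""
    if level == "response":
        return [response.strip()]
    if level == "sentence":
        # Naive split on whitespace that follows ., ! or ?
        parts = re.split(r"(?<=[.!?])\s+", response.strip())
        return [p for p in parts if p]
    raise ValueError(f"unknown unit level: {level}")


# Invented example response.
response = ("Setup took forever. I gave up on the import step! "
            "Support was kind though.")

sentence_units = split_units(response, "sentence")
response_units = split_units(response, "response")

print(len(sentence_units), "units at sentence level")
print(len(response_units), "unit at response level")
```

One coder would assign three codes here and the other one, so any agreement score computed across them compares different objects rather than different interpretations.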

I learned this the hard way on a consumer fintech study with 42 app store reviews, 300 open-ended survey responses, and 18 churn interviews. We were under pressure to deliver in five days, so we skipped formal unit definitions and let three people “tag what felt relevant.” The result looked rich but collapsed under scrutiny because one person coded emotions, another coded product issues, and I coded moments of expectation failure. Since then, I’d rather spend 90 minutes tightening the codebook than lose two days reconciling chaos.

If your raw material is survey text rather than interviews, this guide to analyzing open-ended survey responses is the more precise playbook.

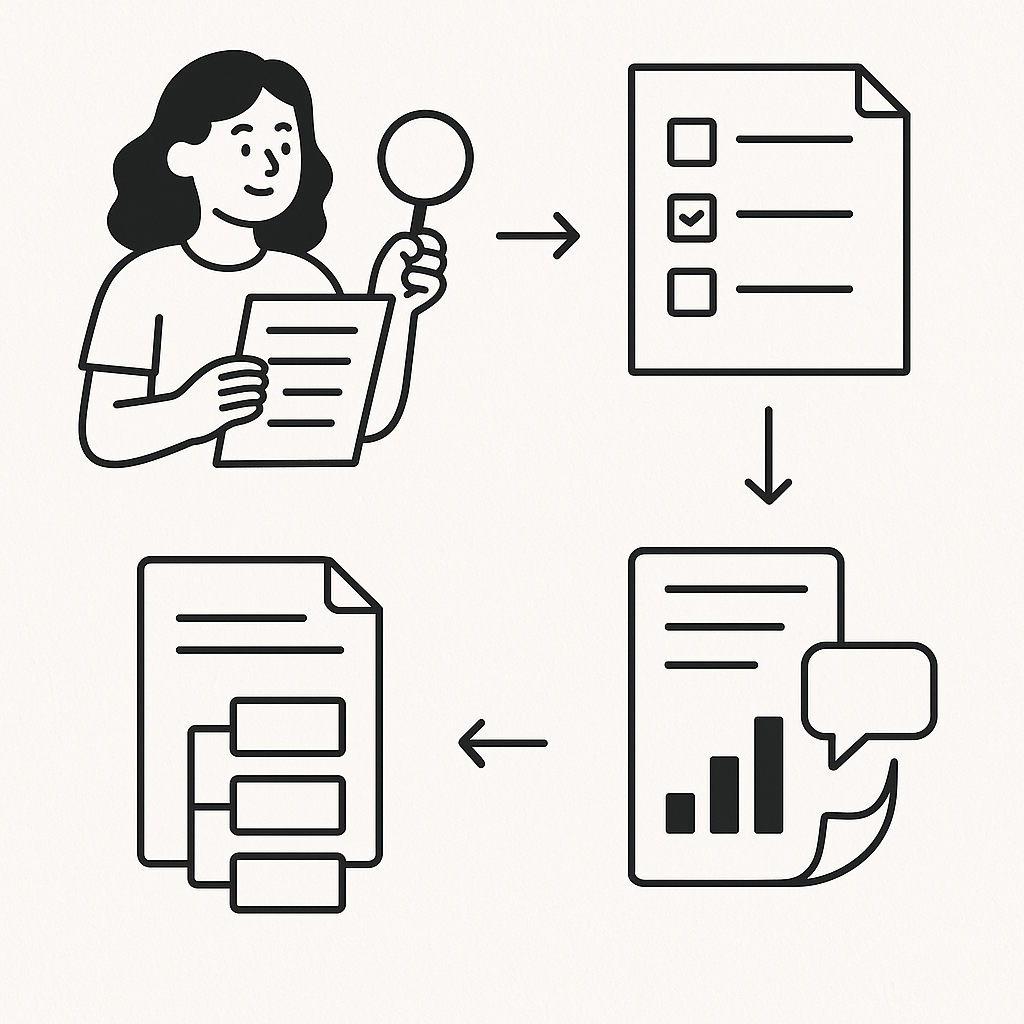

Qualitative content analysis is iterative, but it should not be vague. The process is a sequence: sample well, code consistently, collapse codes into categories, interpret themes, and stop when additional data no longer changes the structure in a meaningful way.
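The stopping rule can be approximated with a simple check on how many new codes each batch contributes. This is a rough proxy under stated assumptions: the function names, the batch structure, and the two-quiet-batches threshold are all hypothetical, and counting new codes does not tell you whether the category structure itself has shifted.

```python
def new_codes_per_batch(batches):
    """For each batch of coded documents, count codes not seen in earlier batches.

    `batches` is a list of batches; each batch is a list of sets of codes,
    one set per document.
    """
    seen = set()
    new_counts = []
    for batch in batches:
        batch_codes = set().union(*batch)
        new_counts.append(len(batch_codes - seen))
        seen |= batch_codes
    return new_counts


def looks_saturated(new_counts, quiet_batches=2):
    """True when the last `quiet_batches` batches added no new codes."""
    if len(new_counts) < quiet_batches:
        return False
    return all(n == 0 for n in new_counts[-quiet_batches:])


# Invented codes, for illustration only.
batches = [
    [{"fee_surprise", "export_not_found"}, {"fee_surprise"}],
    [{"payout_delay"}, {"fee_surprise"}],
    [{"export_not_found"}],
    [{"payout_delay", "fee_surprise"}],
]

new_counts = new_codes_per_batch(batches)
print(new_counts)
print(looks_saturated(new_counts))
```

Treat a positive result as a prompt to review the frame, not as proof that sampling can stop.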

The biggest mistake in this stage is rushing from codes to themes. Codes are labels. Categories are structured groupings. Themes are interpretive claims. If you skip the middle layer, your findings become inspirational but flimsy.
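The middle layer can be made explicit as a mapping from codes to categories. The sketch below is illustrative: the mapping, the code labels, and the `roll_up` helper are hypothetical. Themes are deliberately left out, since they are interpretive claims a researcher writes after reviewing the categories.

```python
from collections import defaultdict

# Hypothetical codebook: each code belongs to exactly one category.
CODE_TO_CATEGORY = {
    "payout_delay": "payment_reliability",
    "fee_surprise": "payment_reliability",
    "export_not_found": "navigation",
}


def roll_up(coded_segments, code_to_category):
    """Group (segment_id, code) pairs by category and flag unmapped codes."""
    by_category = defaultdict(list)
    unmapped = set()
    for segment_id, code in coded_segments:
        category = code_to_category.get(code)
        if category is None:
            unmapped.add(code)  # code exists in the data but not the codebook
        else:
            by_category[category].append(segment_id)
    return dict(by_category), unmapped


coded_segments = [
    ("int-01:s3", "fee_surprise"),
    ("int-02:s1", "payout_delay"),
    ("int-02:s7", "export_not_found"),
    ("int-04:s2", "reputation_risk"),
]

by_category, unmapped = roll_up(coded_segments, CODE_TO_CATEGORY)
print(by_category)
print(unmapped)
```

Unmapped codes are worth reviewing by hand. They are often where the existing frame is missing something.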

Sampling matters more than most people admit. If your content only reflects vocal power users or recent churners, your categories will be skewed from the start. For that reason, I often pair content analysis with a tighter recruitment strategy like purposive sampling instead of broad convenience sampling.

Adding more researchers does not automatically make analysis more rigorous. It often makes disagreement explode unless the codebook is precise and the team calibrates on the same sample.

Inter-rater reliability matters, especially when findings will influence high-stakes product or market decisions. But teams misuse it by calculating agreement on a messy framework and calling low alignment “the richness of qualitative work.” No — low alignment usually means your code definitions are weak, overlapping, or operating at mixed levels of abstraction.
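One common agreement statistic for two coders is Cohen's kappa, which corrects raw agreement for the agreement expected by chance. The sketch below is a plain-Python illustration with invented labels. It assumes both coders labeled the same units in the same order, with exactly one code per unit.

```python
from collections import Counter


def cohens_kappa(coder_a, coder_b):
    """Cohen's kappa for two coders assigning one label per unit."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("both coders must label the same non-empty set of units")
    n = len(coder_a)

    # Observed agreement: share of units where both coders chose the same label.
    observed = sum(a == b for a, b in zip(coder_a, coder_b)) / n

    # Chance agreement: based on how often each coder used each label.
    freq_a, freq_b = Counter(coder_a), Counter(coder_b)
    expected = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)

    if expected == 1.0:
        return 1.0  # both coders used a single identical label throughout
    return (observed - expected) / (1 - expected)


# Invented labels for eight units.
coder_a = ["trust", "trust", "payout", "payout", "trust", "payout", "trust", "payout"]
coder_b = ["trust", "payout", "payout", "payout", "trust", "payout", "trust", "trust"]

kappa = cohens_kappa(coder_a, coder_b)
print(round(kappa, 2))
```

Here the coders agree on 6 of 8 units (0.75), chance agreement is 0.5, and kappa comes out at 0.5. If scikit-learn is available, `sklearn.metrics.cohen_kappa_score` computes the same statistic.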

On a 9-person marketplace team, I ran a directed content analysis on 36 seller interviews to validate an existing trust framework. We had a brutal constraint: leadership review in four business days, with two PMs helping code who had never done formal qualitative analysis. Our first pass agreement was terrible because “trust issue” swallowed everything from payout timing to fear of buyer retaliation. We rewrote the codebook into concrete event-based codes, reran a 20% overlap sample, and ended with a framework the team could actually use for roadmap decisions.
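Drawing an overlap sample is easy to make reproducible. The sketch below assumes the sample is drawn at the interview level and uses hypothetical identifiers. The study above does not say whether the overlap was taken over interviews or over coded segments, so treat that choice as an assumption.

```python
import math
import random


def overlap_sample(unit_ids, fraction=0.2, seed=0):
    """Pick a reproducible random subset for every coder to code independently."""
    if not 0 < fraction <= 1:
        raise ValueError("fraction must be in (0, 1]")
    ids = list(unit_ids)
    # Round up so small datasets still get at least one shared unit.
    k = max(1, math.ceil(len(ids) * fraction))
    rng = random.Random(seed)  # fixed seed so the draw can be repeated
    return sorted(rng.sample(ids, k))


# Hypothetical identifiers for 36 interviews.
interview_ids = [f"seller-{i:02d}" for i in range(1, 37)]
shared = overlap_sample(interview_ids)

print(len(shared), "interviews coded by everyone")
```

With 36 interviews and a 20% fraction, rounding up gives 8 shared interviews. Fixing the seed means the same subset can be redrawn when the codebook is revised and agreement is checked again.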

Content analysis also gets confused with thematic analysis and grounded theory. Thematic analysis is broader and often more interpretive; content analysis is more structured and category-driven. Grounded theory goes further — it aims to build theory from data through constant comparison and theoretical sampling, not just organize content into meaningful patterns.

The manual bottleneck in content analysis is coding at volume. Reading 200 interviews, 3,000 survey responses, or 8,000 app reviews line by line is not noble. It’s slow, expensive, and often inconsistent.

This is where I think most research teams should be more pragmatic. AI can dramatically speed up the coding and theme-identification step if you keep tight researcher control over the framework, validate outputs on a subset, and separate machine pattern detection from human interpretation.

That’s exactly why I recommend using better qualitative analysis software instead of brute-force spreadsheets. And if you’re running interview-led studies, Usercall is the rare tool I’d actually trust in the workflow: it runs AI-moderated interviews with deep researcher controls, analyzes qualitative data at scale, and lets you trigger user intercepts at key product moments so you can connect the metric drop to the user’s own explanation of why it happened.

That combination matters. Traditional content analysis usually starts after the fact, once the damage is done and you’re drowning in transcripts. Usercall shortens that cycle by collecting research-grade qualitative input continuously and automating the first-pass coding and pattern detection across interviews, reviews, and survey responses — while still letting the researcher make the final analytical call.

The practical takeaway is simple: choose the right type of content analysis, define your unit of analysis before coding, keep manifest and latent meaning separate, and treat reliability as a design problem rather than a statistical afterthought. If you do that, content analysis stops being a tedious documentation exercise and becomes what it should be — a fast, defensible way to explain behavior in human terms.

Related: Qualitative Data Analysis: A Complete Guide for Researchers and Product Teams · Stop Wasting Weeks Coding: The Best Computer Programs for Qualitative Data Analysis (and What Actually Works) · How to Analyze Open-Ended Survey Responses (Without Reading Every One) · Purposive Sampling: A Complete Guide for Qualitative Researchers (2026)

Usercall helps me do the part of qualitative research that usually eats the calendar: collecting and analyzing high-volume interview data without losing depth. If you want AI-moderated user interviews with research-grade analysis and tight researcher control, Usercall is the most practical way I’ve seen to scale qualitative insight without handing the work to an agency.