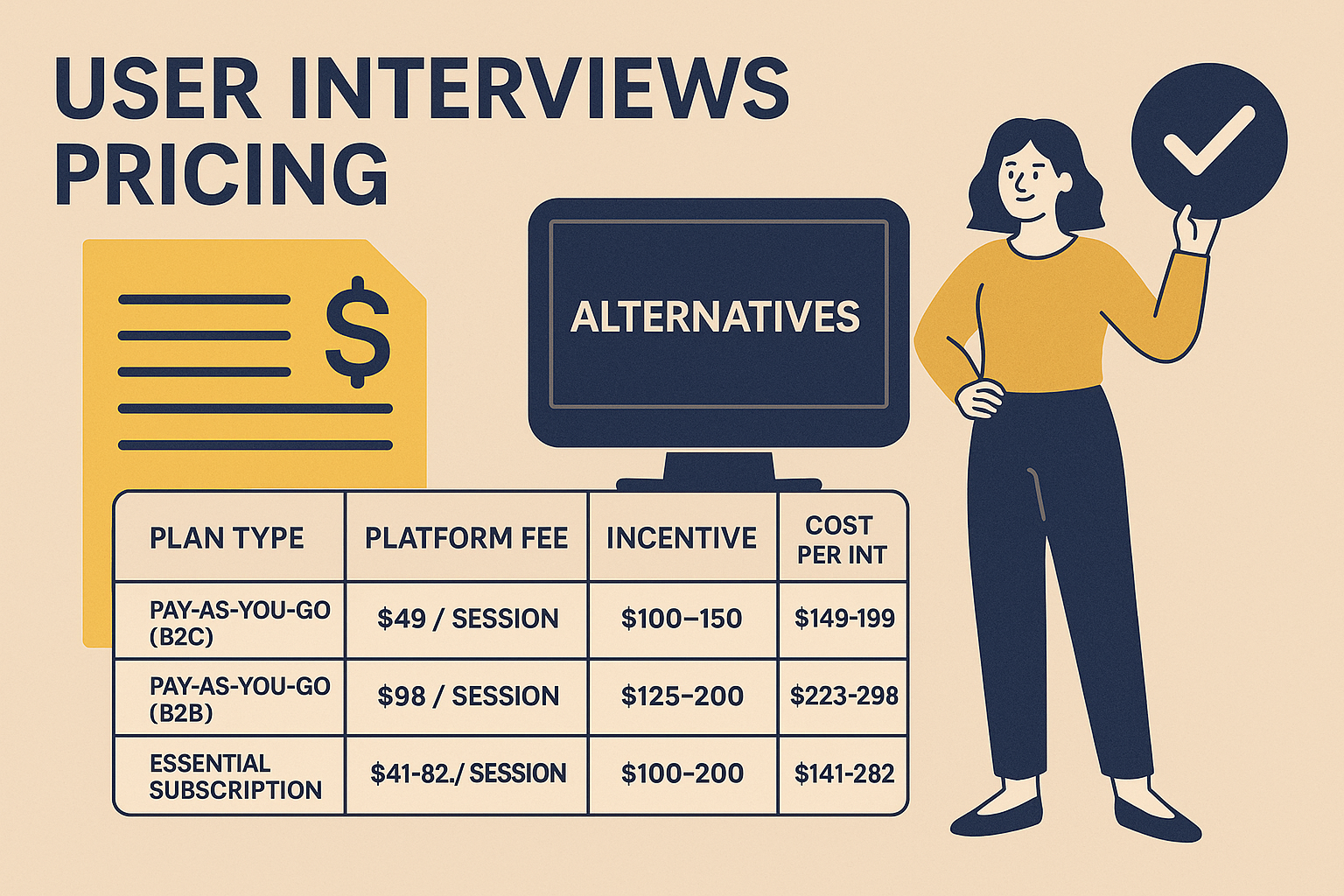

Here is a practical pricing breakdown, based on total cost per completed interview rather than platform fees alone.

| Plan Type | Platform Fee | Incentive (Typical) | Total Cost per Interview |
|---|---|---|---|
| Pay‑As‑You‑Go (B2C) | $49 / session | $100–150 | ~$149–199 |
| Pay‑As‑You‑Go (B2B) | $98 / session | $125–200 | ~$223–298 |
| Essential Subscription | $41–82 / session | $100–200 | ~$141–282 |
| Professional Subscription | $36–72 / session | $100–200 | ~$136–272 |
| Custom | Custom ($30–75 est.) | $100–200 | ~$130–275 |

These estimates assume you’re using User Interviews for panel access, screening, scheduling, and incentive handling.
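The totals in the table are simple range sums: the low end adds the lowest platform fee to the lowest incentive, and the high end adds the two highs. A minimal Python sketch of that arithmetic, using the table's figures; the function and variable names are illustrative and not part of any User Interviews API:

```python
def total_cost_range(platform_fee, incentive):
    """Add two (low, high) dollar ranges to get total cost per completed interview."""
    fee_low, fee_high = platform_fee
    inc_low, inc_high = incentive
    return (fee_low + inc_low, fee_high + inc_high)

# Figures copied from the pricing table; a flat fee is written as (x, x).
plans = {
    "Pay-As-You-Go (B2C)": ((49, 49), (100, 150)),
    "Pay-As-You-Go (B2B)": ((98, 98), (125, 200)),
    "Essential Subscription": ((41, 82), (100, 200)),
    "Professional Subscription": ((36, 72), (100, 200)),
}

totals = {name: total_cost_range(fee, inc) for name, (fee, inc) in plans.items()}

for name, (low, high) in totals.items():
    print(f"{name}: ~${low}-{high} per interview")
```

Swapping in your own fee and incentive ranges gives a quick budget estimate before you commit to a plan.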

The part people underestimate: platform + incentive is not your full cost. Coordination time, moderation time, transcription, and analysis can easily add $100+ per session (and much more if it’s a senior researcher or you’re doing rigorous coding).
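To see how staff time changes the picture, here is a rough sketch of a fully loaded cost per session. The $49 fee and $100 incentive come from the B2C row of the table; the 2.5 staff hours and the $60/hour rate are assumptions chosen only for illustration, so replace them with your own numbers:

```python
def fully_loaded_cost(platform_fee, incentive, staff_hours, hourly_rate):
    """Platform fee + incentive + the cost of staff time spent on one session."""
    return platform_fee + incentive + staff_hours * hourly_rate

# B2C pay-as-you-go session: fee and incentive taken from the table.
direct = 49 + 100

# Assumed (hypothetical) overhead: 2.5 hours of coordination, moderation,
# and analysis at $60/hour.
loaded = fully_loaded_cost(49, 100, staff_hours=2.5, hourly_rate=60)

print(f"Direct cost: ${direct}")
print(f"Fully loaded cost: ${loaded:.0f}")
print(f"Staff time adds: ${loaded - direct:.0f}")
```

Under these assumptions, staff time alone costs about as much as the platform fee and incentive combined.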

| Platform | Interview Type | Pricing Structure | Strengths |
|---|---|---|---|
| User Interviews | Live moderated | $49–$98/session + incentive | Large panel, strong for niche B2B recruitment |
| UserCall | Async AI-moderated voice | $99–$299/month (flat rate) | No scheduling, scalable, transcripts + themes + summaries |
| Respondent | Live moderated | 50% of incentive (min $40) | Strong for professional/B2B recruiting |
| UserTesting | Moderated + unmoderated | $30k+/year (enterprise) | Usability tasks at scale, video recordings, enterprise workflow |
| PlaybookUX | Moderated + unmoderated | Starts at ~$267/month | Good value, video interviews + scheduling, lightweight ops |
| Maze | Unmoderated | $1,500–15,000/year | Fast prototype/flow testing with analytics |
| Lyssna | Unmoderated | From $89/month | Quick preference tests and directional feedback |
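The two per-session fee structures in the table behave differently as incentives grow: one is a fixed fee, the other scales with the incentive. The sketch below encodes both rows as written in the table. It is a reading of those table entries, not either vendor's official fee schedule, and the function names are made up for this example:

```python
def respondent_fee(incentive, rate=0.5, minimum=40):
    """Fee as read from the table: 50% of the incentive, never below $40."""
    return max(rate * incentive, minimum)

def user_interviews_fee(b2b=False):
    """Pay-as-you-go session fee from the table: $49 (B2C) or $98 (B2B)."""
    return 98 if b2b else 49

# Compare platform fees for a B2B session at a few incentive levels.
for incentive in (50, 100, 200):
    pct_fee = respondent_fee(incentive)
    flat_fee = user_interviews_fee(b2b=True)
    print(f"Incentive ${incentive}: percentage fee ${pct_fee:.0f}, flat fee ${flat_fee}")
```

With these figures, the percentage-based fee stays below the $98 B2B session fee until the incentive approaches $200.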

If your team is tired of scheduling loops, no-shows, and manual analysis, UserCall flips the model:

Best for: product and UX teams needing fast turnaround, early validation, continuous discovery, churn/NPS follow-ups, and “always-on” qualitative insight.

Respondent: strong for professional recruitment.

Best for: high-value, hard-to-reach professionals for live interviews.

UserTesting: enterprise-grade usability and reaction testing.

Best for: enterprise UX orgs running constant usability testing.

PlaybookUX: a good “all-around” option with more approachable pricing.

Best for: startups or agencies that want a single tool for interviews + usability.

Maze and Lyssna: both are excellent for rapid, unmoderated UX feedback.

Best for: designers/PMs who need speed and direction, not deep interviews.

| Your Goal | Best Option | Why |
|---|---|---|
| Live, in-depth interviews with niche users | User Interviews / Respondent | Best panel access for hard targets + classic moderation |
| Async, fast insights with near-zero ops | UserCall | No scheduling, no moderation, auto themes + summaries |
| Remote usability testing | UserTesting / Maze | Task flows, screen capture, scalable unmoderated UX |
| Budget-friendly mix of moderated + unmoderated | PlaybookUX | Good balance of cost and capability |
| Fast design preference checks | Lyssna | Quick and lightweight, great for direction |

Last quarter, I ran a concept test with two teams.

Team A (User Interviews):

Team B (UserCall):

AI doesn’t replace high-stakes, human-led depth interviews. But for early validation, continuous discovery, and speed-sensitive projects, async AI interviews can cut total cost dramatically and remove coordination bottlenecks.

Typical total cost is ~$150–$300 per completed interview, depending on incentives, panel difficulty (B2B vs B2C), and your team’s time.

Yes. You generally pay a platform fee per session plus the participant incentive.

Ops + time: scheduling, no-shows, rescheduling, moderation time, transcription cleanup, and synthesis.

Often yes, especially for niche B2B profiles, but costs and lead times can rise with stricter screeners.

Sometimes. If your goal is speed and volume, async AI-moderated options can be much cheaper in total cost and resources because they reduce scheduling and analysis overhead.
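One way to check the "speed and volume" point is a break-even calculation between a flat monthly fee and a per-session fee. The sketch below uses the $99 and $299 monthly figures and the $49 session fee from the comparison table. It assumes the flat plan covers however many interviews you run in a month and that incentives are the same on both sides; neither assumption is confirmed by the source, so check actual plan limits before relying on it:

```python
import math

def breakeven_sessions(monthly_fee, per_session_fee):
    """Smallest number of sessions per month at which the flat monthly fee
    is strictly cheaper per session than paying per session."""
    return math.floor(monthly_fee / per_session_fee) + 1

for monthly_fee in (99, 299):
    n = breakeven_sessions(monthly_fee, per_session_fee=49)
    print(f"${monthly_fee}/month beats $49/session from {n} sessions per month "
          f"(${monthly_fee / n:.2f} each)")
```

Below the break-even volume, paying per session is the cheaper platform fee, which is why the answer to this question depends on how many interviews you actually run.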

If you need results in 24–72 hours, and cost is not an issue.

Once you've found a pricing model that works for your team, make sure the interviews themselves are set up for success: our guide to user interview questions gives you 50+ tested examples ready to use. If you're looking for an AI-powered alternative that keeps costs predictable, explore UserCall and see how teams are cutting research costs without cutting quality.
