Most feedback programs spend time debating question wording. The bigger problem is almost always timing. Feedback collected days after an experience is worth far less than feedback collected the moment the experience ends.

Teams frequently send surveys days after an experience, or schedule interviews weeks later. By then, users have forgotten the details of what actually happened, so they generalize. Vague responses like "it wasn't the right fit" or "I just didn't need it anymore" feel complete, but they explain nothing useful.

The most useful feedback happens while the experience is still fresh, when users clearly remember what they were trying to do and what didn't work.

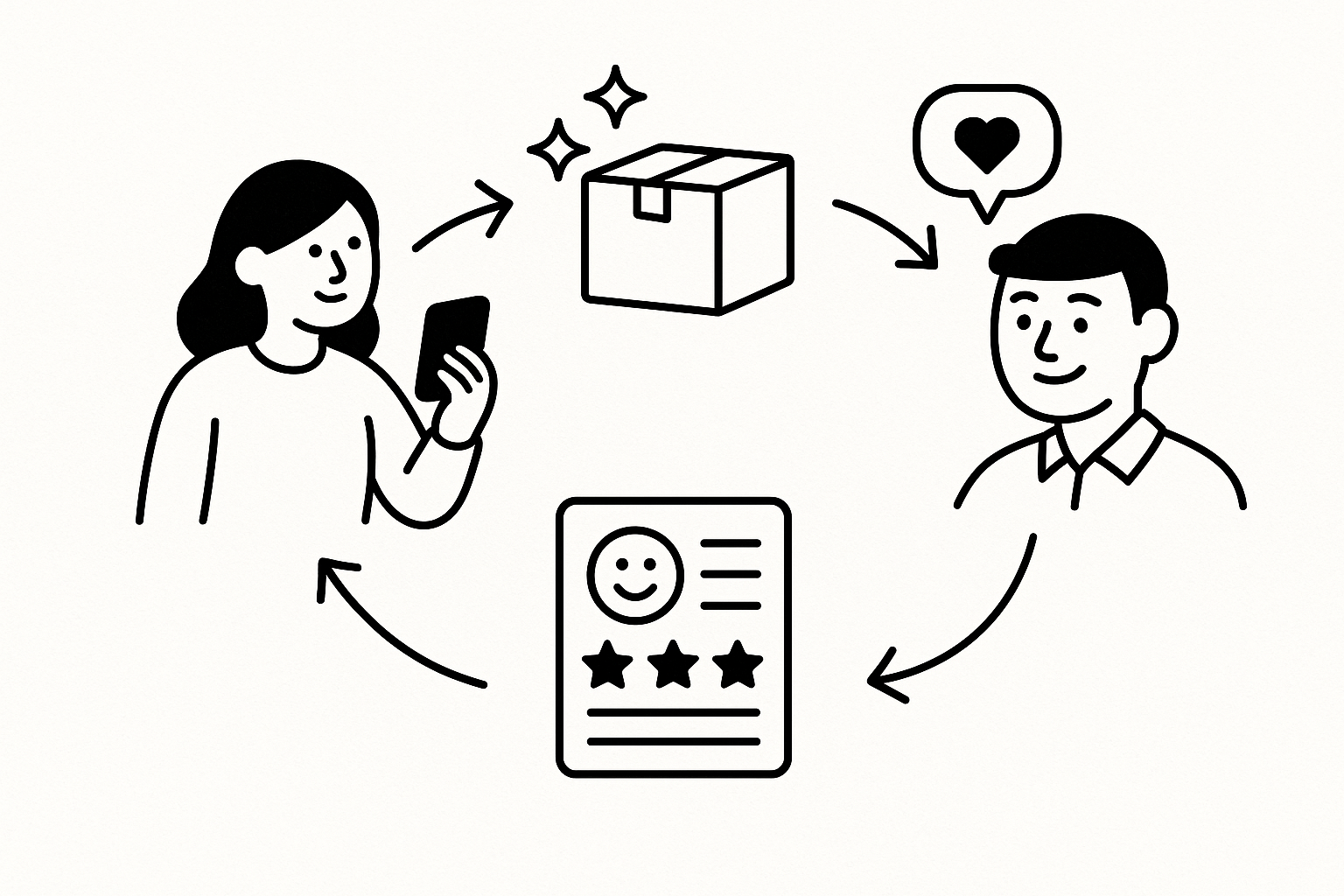

Important insights often appear during specific moments in the customer journey. These moments reveal the real reasons behind user behavior and product outcomes—not summaries users reconstruct after the fact.

Each of these moments provides a window into what customers actually experienced—not what they remember experiencing a week later.

Even teams that actively want customer feedback often end up with insight that arrives too late to act on. The collection methods work—the timing doesn't. As a result, teams understand what happened in their metrics, but not why it happened.

Different moments in the customer journey surface different types of insight. Knowing which signal to look for at each stage separates teams that build from evidence from teams that build from assumption.

Cancellations are the moment most teams handle worst. By the time a user cancels, the decision is made—but the reason is still fresh. A conversation started at cancellation captures why, before users construct a more polished story about fit and value. For a deeper breakdown, see Why Customers Leave: 12 Real Reasons.

New signups arrive with a specific expectation—something triggered the decision to try the product. That expectation rarely matches the product's intended positioning. Asking immediately after signup reveals what users hoped to accomplish, which is far more useful than what the product team assumes they want.

Onboarding drop-off is rarely about confusion with a single UI element. It's usually about the user's mental model not matching the product's flow. Catching users at the drop-off point—not after—lets you ask what they expected to happen next. See also: Why Users Drop Off During Onboarding.

Activation failure is the gap between account creation and first meaningful use. Users who sign up and never engage often had a clear intent the product didn't meet in the first session. The insight isn't in the analytics—it's in what users say they were trying to do when they gave up.

Feature abandonment usually signals a gap between the feature's design and the user's actual workflow. Asking at the moment of last use—before the memory degrades—surfaces what users expected the feature to do and what it failed to deliver.

Users who stay on a free or trial plan indefinitely have made a decision not to upgrade, and that decision has a specific reason. Asking at the moment a user declines or ignores an upgrade prompt captures the real objection while it is still concrete, before it hardens into a general statement about price or value.

Checkout abandonment isn't just a pricing problem. It's often trust, uncertainty about commitment terms, or a question that went unanswered at the wrong moment. An exit conversation at abandonment reveals the specific friction—not an aggregated guess. Related: Why Users Abandon Checkout.

When a user hits an error or failure, they have a clear memory of what they were trying to do. That context disappears fast. Capturing it immediately reveals not just the bug report, but the task the bug interrupted—which is often more important for prioritization.

Support requests are usually symptoms of a deeper product problem. The user asking "how do I do X" often reveals that X is either too hard to find or fundamentally unintuitive. Treating support as a feedback channel—not just a resolution channel—surfaces the patterns behind individual tickets.
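The moments above can be summarized as a lookup from product event to opening question. The sketch below is illustrative only: the event names are hypothetical (they are not taken from any particular analytics tool), and the question wording is a paraphrase of what each moment is described as revealing.

```python
from typing import Optional

# Hypothetical event names mapped to an opening question for that moment.
# The questions paraphrase the article; adjust the wording to your product.
MOMENT_QUESTIONS = {
    "subscription_cancelled": "What led you to cancel today?",
    "signup_completed": "What were you hoping to accomplish when you signed up?",
    "onboarding_abandoned": "What did you expect to happen next?",
    "activation_failed": "What were you trying to do when you stopped?",
    "feature_abandoned": "What did you expect this feature to do for you?",
    "upgrade_declined": "What held you back from upgrading?",
    "checkout_abandoned": "What question or concern came up at checkout?",
    "error_encountered": "What were you trying to get done when this failed?",
    "support_contacted": "What were you trying to do before you reached out?",
}


def question_for(event_name: str) -> Optional[str]:
    """Return the opening question for a key moment, or None for other events."""
    return MOMENT_QUESTIONS.get(event_name)
```

Keeping the mapping in one place makes it easy to review which moments are covered and to refine a question without touching the code that fires it.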

Traditional methods—surveys, NPS questionnaires, scheduled user interviews, customer support analysis—can produce useful data. The problem isn't the methods. It's that these methods almost always happen after the moment has passed. Important context about the experience disappears before the question is ever asked.

When a key product event triggers a feedback invitation, users respond while the experience is still accurate in their memory. A user who just abandoned onboarding doesn't need to reconstruct what happened. They know exactly what confused them, what they expected next, and what caused them to stop.

This is the difference between research that merely restates metrics and research that explains them: specific, actionable, and collected from a user who still remembers the session.

When feedback is tied to key moments in the customer journey, every product event becomes a research opportunity. Teams stop running quarterly research cycles and start accumulating insight continuously.

The result is a living picture of user behavior, updated as events happen and grounded in real moments. That's what turns product analytics from a "what happened" dashboard into a "why it happened" system.

The best time to collect feedback is immediately after an important moment in the user journey: signing up, abandoning onboarding, completing a purchase, or contacting support. The closer to the experience, the more specific and useful the feedback.

When feedback is collected right after an experience, users remember what actually happened. When it's delayed, they summarize and rationalize—which produces accurate-sounding but unhelpful answers like "it wasn't the right fit."

The moments that matter most are signup, onboarding drop-off, feature abandonment, cancellations, checkout abandonment, product errors, and support interactions. Each moment has a specific question it can answer that no other moment can.

Teams collect feedback at these moments by triggering feedback invitations when key product events occur. When a user abandons onboarding, cancels a subscription, or ignores an upgrade prompt, an automated trigger starts a conversation while the experience is still accurate in memory. See When to Ask Users for Feedback for more on the mechanics.
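As a rough illustration of an event-based trigger, the sketch below decides whether a product event should start a feedback conversation. Everything named here is an assumption for the example: the event names, the `ProductEvent` and `FeedbackInvitation` types, and the 10-minute freshness window are all hypothetical, and a real system would hand the invitation to whatever messaging or in-app channel the team already uses.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional

# Hypothetical names for the three events mentioned above.
TRIGGER_EVENTS = {
    "onboarding_abandoned",
    "subscription_cancelled",
    "upgrade_prompt_ignored",
}

# Assumed cutoff: past this point the moment is treated as no longer fresh.
FRESHNESS_WINDOW = timedelta(minutes=10)


@dataclass(frozen=True)
class ProductEvent:
    user_id: str
    name: str
    occurred_at: datetime


@dataclass(frozen=True)
class FeedbackInvitation:
    user_id: str
    event_name: str
    sent_at: datetime


def maybe_invite(event: ProductEvent, now: datetime) -> Optional[FeedbackInvitation]:
    """Return an invitation only for a trigger event that is still fresh."""
    if event.name not in TRIGGER_EVENTS:
        return None  # not one of the key moments
    if now - event.occurred_at > FRESHNESS_WINDOW:
        return None  # too late: the user would be reconstructing, not recalling
    return FeedbackInvitation(event.user_id, event.name, sent_at=now)


if __name__ == "__main__":
    now = datetime.now(timezone.utc)
    event = ProductEvent("user-123", "subscription_cancelled", now - timedelta(minutes=1))
    print(maybe_invite(event, now))
```

The freshness check is the part that encodes the article's argument: an invitation that cannot go out close to the event is skipped rather than sent late.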

Knowing when to ask is only half the equation. The format and tool you use determine whether you get honest answers or polished noise. Our customer feedback survey software guide breaks down what works, what doesn't, and what to use at each stage. Usercall can help you reach users at the right moment with the right kind of question.
